What CRO actually is (and why most "CRO experts" don't do it)

A plain-English look at the discipline, what real CRO work looks like, and the 5 questions to ask any "expert" before you hire them.

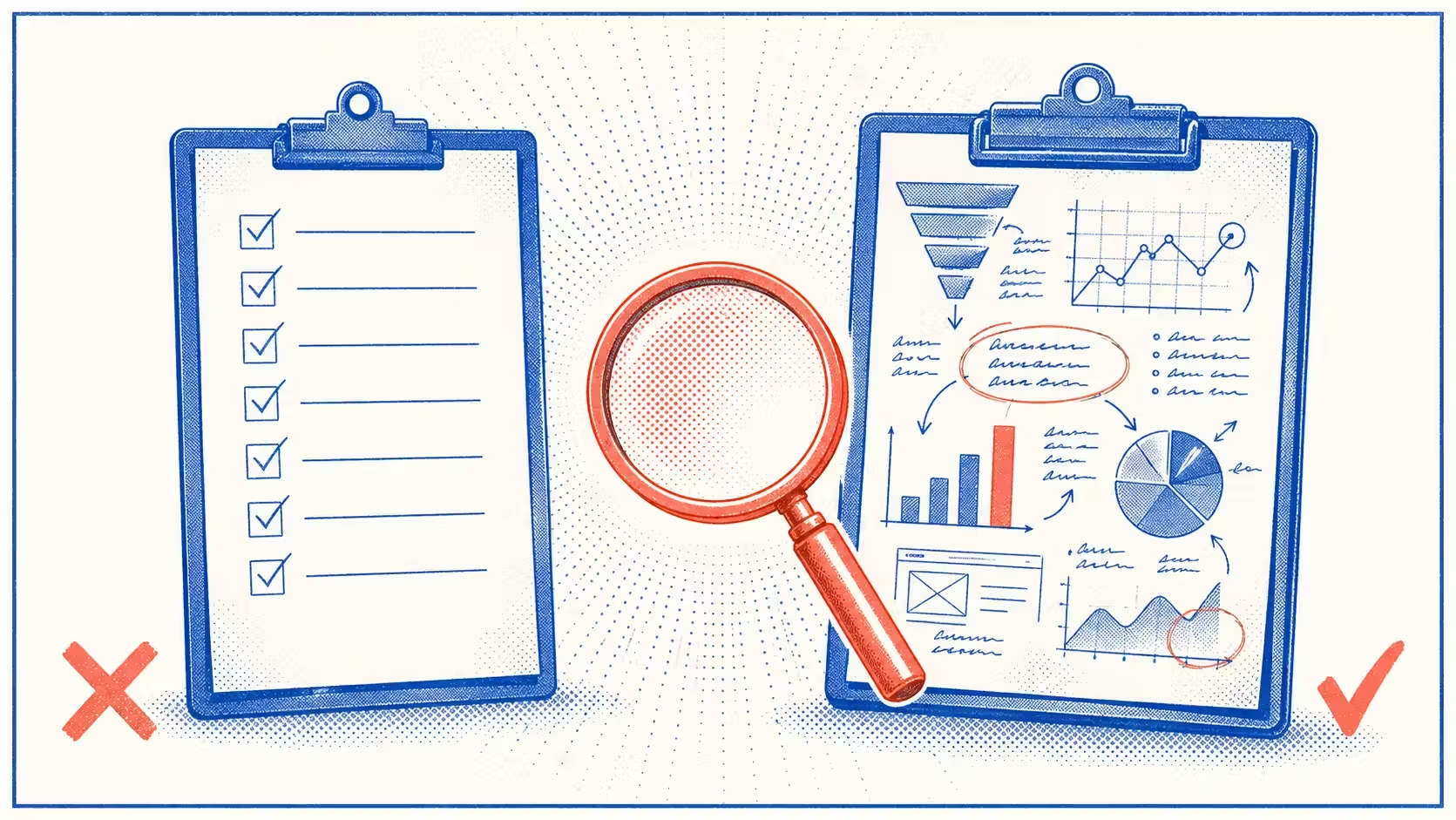

TL;DR. Conversion Rate Optimization (CRO) has become one of the most hollowed-out terms in the Shopify operator world. Most paid CRO services send back a PDF of generic best-practice suggestions, charge a four-figure fee, and never name the specific problem on the specific store — usually because they never looked at the actual funnel data in the first place. Operators get a checklist; their conversion rate doesn't move. Real CRO is a discipline, not a checklist — research grounded in real funnel data, a specific hypothesis, a test, an honest measurement. This article walks through what that discipline actually looks like, the 5 questions to ask any "CRO expert" before you hire them, and why the cheapest real CRO work most operators can do is run the diagnosis themselves first.

What CRO actually is

The honest definition of CRO, with no marketing varnish: it's the discipline of changing a website to get more of the visitors you already have to take the action you want them to take — and measuring that change rigorously enough to know it actually worked.

That definition has four working parts. If a "CRO" engagement skips any of them, what you're getting isn't CRO — it might be design, it might be copywriting, it might be a lucky guess. It's not the discipline.

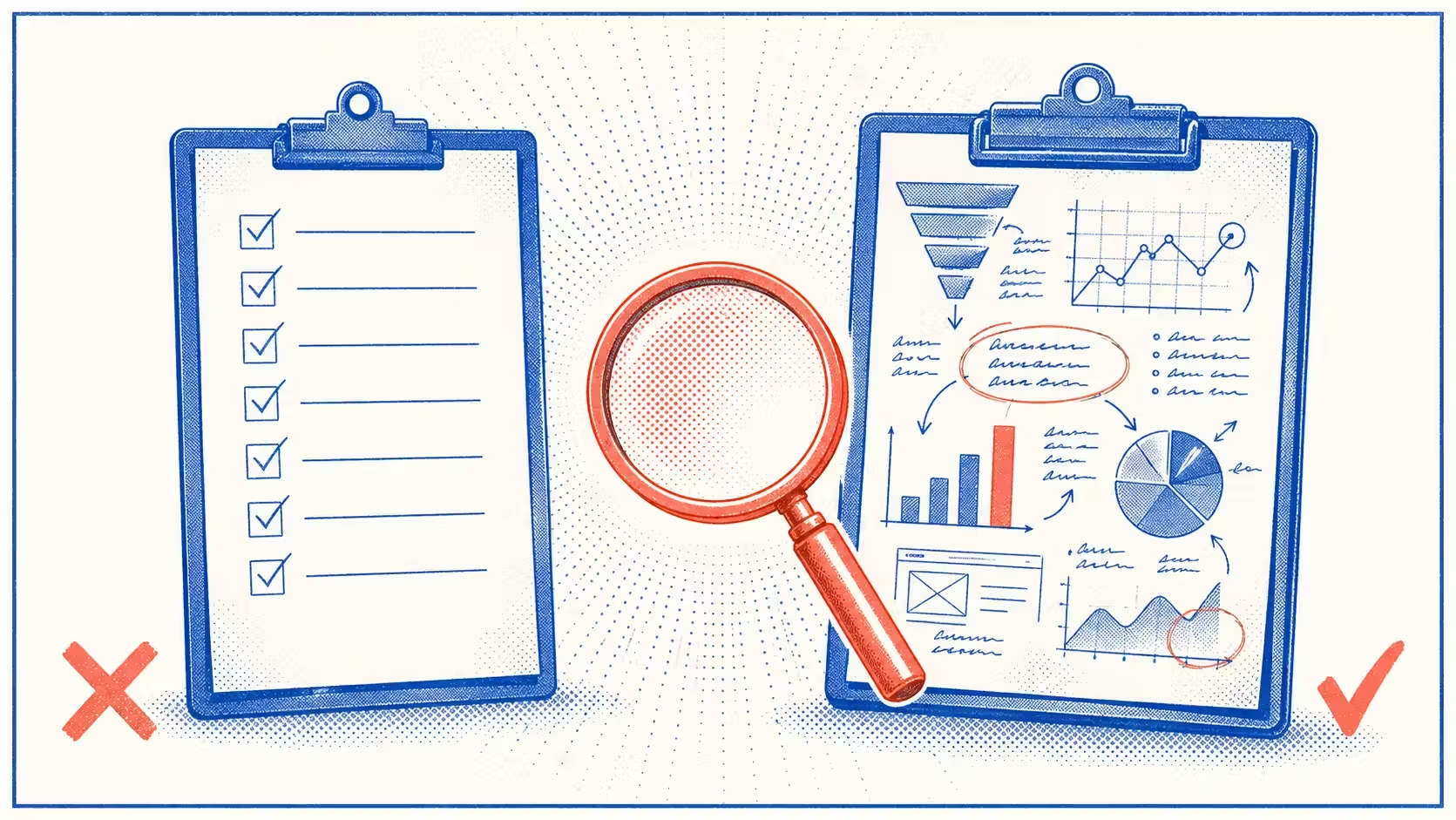

The four parts, in order:

1. Research grounded in real funnel data. Before any recommendation gets made, the work is to look at what visitors are actually doing on the store. That means pulling drop-off rates at every funnel stage from the analytics layer (Shopify Analytics, GA4, Triple Whale, whatever the store runs on), watching session recordings of real shoppers on real product pages, reading support tickets for the most-asked questions, and running post-purchase surveys to hear in customers' own words why they did or didn't buy. The output of this step is a list of specific places in the funnel where visitors are dropping off — not a feeling, but a number, attached to a specific page or step.

2. A specific hypothesis written in plain English. Once the data is in, the next step is naming a falsifiable belief about why visitors are dropping off where they are. The bar for a real hypothesis is that a 12-year-old could repeat it back: "Visitors are abandoning at checkout because the product page doesn't show the shipping cost, so they first see it at the final step and feel ambushed." That's a hypothesis. "Improve the user experience to drive conversions" is a wish.

3. A test that isolates the variable you're changing. This means a controlled experiment: ideally a real A/B test, but pragmatically a deliberately scoped change whose effect you can isolate. Most stores under $5M revenue can't run statistically valid A/B tests on most pages because they don't have the traffic. That's a real constraint, not a stop sign. The discipline still applies; the rigor adapts. The point is that the change is small enough and tracked closely enough that you can attribute the result to it.

4. An honest measurement, with a roll-forward decision. After the test runs, look at the result honestly: did the metric you said you'd move actually move? If yes, ship the change permanently. If no, kill it and write down what you learned. If unclear, run it longer. Most paid CRO services skip this step entirely — they hand over the change list and disappear. The discipline lives in the loop.

Without all four, it's not CRO.
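To make the traffic constraint in part 3 concrete, here is a minimal sketch of the standard normal-approximation sample-size formula for comparing two conversion rates. The function name, the 2% baseline rate, and the 10% relative lift are illustrative assumptions, not figures from any real store; the 5% significance level and 80% power are common conventions, not requirements.

```python
from math import ceil, sqrt
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate visitors needed in EACH variant of a two-sided A/B test.

    Normal-approximation formula for detecting a difference between two
    proportions. Treat the result as a planning estimate, not a guarantee.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)           # desired power
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Hypothetical store: 2% baseline conversion, hoping to detect a 10% relative lift.
needed = visitors_per_variant(baseline_rate=0.02, relative_lift=0.10)
print(f"Visitors needed per variant: {needed:,}")
```

Under these assumed inputs the estimate lands on the order of 80,000 visitors per variant, which is why a small store usually has to fall back on tightly scoped before-and-after changes rather than a formal A/B test.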

What "CRO" usually is, in practice

What most paid CRO services actually deliver: a PDF of generic suggestions sent within a week of the engagement starting, with no underlying funnel research about why those specific changes will help this specific store. The recommendations are recognizable: change the CTA color, add trust badges, shorten checkout, enable Shop Pay first, add an exit-intent popup. Shopify operators we've talked to call this "checklist CRO," and it's the dropshipping of CRO: every store gets the same advice.

The reason it's so common is structural. Real CRO research is expensive and slow, and few clients will pay for the hours an analyst spends reading session recordings or pulling event data, so the freelance market on Fiverr and Upwork has converged on the only deliverable that scales: a generic checklist. AI tools have made the checklist version trivially easy to generate. Most operators can't tell from the deliverable alone whether the recommendations were grounded in real research; they find out weeks after the engagement, when their conversion rate hasn't moved.

A checklist isn't useless. Some of the suggestions in any generic CRO deck might genuinely help. The problem is that the operator has no way to know which ones — because nobody named which problem is dominant on their store. They ship six fixes; one of them maybe moves the number; the other five were noise. And because measurement was skipped, nobody can tell which was which.

The diagnosis matters more than the fix. The fix without the diagnosis is just a hope.

The 5 questions to ask any "CRO expert" before you hire them

Print this list and run any prospective "CRO expert" through it before you sign a contract — whether they're on Fiverr, Upwork, or running a boutique agency.

1. "Before recommending changes, what specific research will you do on my store?"

Real answer mentions pulling funnel data from your analytics (Shopify Analytics, GA4, your event tracking layer), reviewing session recordings, reading support tickets, running post-purchase surveys, and benchmarking your data against industry baselines. They should ask what tools you currently have set up and what data they'll have access to.

Red flag answer: "I'll do a CRO audit using industry best practices." That's a checklist with a uniform on. It's not research; it's the absence of research.

2. "What's an example hypothesis you'd write before changing anything on my product page?"

Real answer is a specific, falsifiable belief in plain English, ideally with a reason attached: "I hypothesize that visitors are abandoning at checkout because the product page doesn't show the shipping cost, so they first see it at checkout. The funnel data will tell us: if the reached-checkout rate is healthy but the reached-checkout-to-purchase rate is low, that's the signal."

Red flag answer: "Improve the user experience to drive conversions." That's not a hypothesis. That's a marketing-deck filler.
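The signal described in the real answer comes down to comparing step-to-step rates across the funnel. A minimal sketch, assuming you can export a visitor count for each stage; the stage names and counts below are made up for illustration, and what counts as "healthy" depends on benchmarks this sketch does not supply.

```python
def stage_rates(counts):
    """Return the conversion rate between each pair of consecutive funnel stages.

    `counts` maps stage name -> visitor count, ordered from top of funnel to bottom.
    """
    stages = list(counts.items())
    rates = {}
    for (prev_name, prev_n), (name, n) in zip(stages, stages[1:]):
        # Guard against an empty stage so the sketch never divides by zero.
        rates[f"{prev_name} -> {name}"] = n / prev_n if prev_n else 0.0
    return rates


# Hypothetical 30-day counts, not real data.
funnel = {
    "sessions": 10_000,
    "added_to_cart": 600,
    "reached_checkout": 300,
    "purchased": 90,
}

rates = stage_rates(funnel)
for step, rate in rates.items():
    print(f"{step}: {rate:.1%}")
```

Each printed line is a number attached to a specific step, which is the form a hypothesis needs before it can be tested.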

3. "How will we know if a change worked?"

Real answer is a specific metric, a specific window, and a specific decision rule, all tied to your actual funnel: "We'll track add-to-cart rate over a 30-day window, expect a 10–15% relative lift, and roll back the change if we don't see at least a 5% relative lift." If they don't have access to the tracking layer to actually measure those metrics, they should say so up front and tell you what needs to be set up first.

Red flag answer: "We'll see when we look at conversions overall." That's vibes, not measurement.
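One way to keep a decision rule honest is to write it down before the test starts, in a form that cannot be reinterpreted afterwards. The sketch below encodes the example rule from the real answer; the class and function names are hypothetical, the lift is read as relative to the baseline rate, and the rule deliberately ignores statistical noise, so it suits a rough before-and-after check rather than a formal test.

```python
from dataclasses import dataclass


@dataclass
class DecisionRule:
    metric: str               # which funnel metric the test is meant to move
    window_days: int          # how long the test must run before deciding
    min_relative_lift: float  # e.g. 0.05 means "at least a 5% lift"


def relative_lift(before_rate, after_rate):
    """Change in the metric as a fraction of its starting value."""
    return (after_rate - before_rate) / before_rate


def decide(rule, before_rate, after_rate, days_elapsed):
    """Apply the pre-agreed rule: keep running, ship, or roll back."""
    if days_elapsed < rule.window_days:
        return "keep running"
    if relative_lift(before_rate, after_rate) >= rule.min_relative_lift:
        return "ship"
    return "roll back"


# The rule quoted above: add-to-cart rate, 30-day window, 5% minimum lift.
rule = DecisionRule(metric="add_to_cart_rate", window_days=30, min_relative_lift=0.05)

# Hypothetical before/after rates, not real data.
print(decide(rule, before_rate=0.060, after_rate=0.066, days_elapsed=30))
```

With these assumed rates the lift is about 10%, so the rule says to ship; the same rates checked on day 12 would return "keep running".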

4. "What's the most recent test you ran that failed, and what did you learn from it?"

Real CRO people have a graveyard of dead hypotheses, and they remember the failures specifically, including what the data said and how it changed their mental model. The failure recall is the credential.

Red flag answer: "All our tests usually work." That either means they don't run real tests or they only run tests they know will win — both of which mean they're not running CRO.

5. "Show me a deliverable from a previous engagement."

Real answer: a research document with actual customer quotes, a hypothesis log, a test write-up with screenshots and stats, post-mortems on tests that didn't move the number. Real CRO engagements leave a trail.

Red flag answer: a generic CRO checklist PDF that could have been produced for any store. If the deliverable doesn't reference that specific store's data, it isn't a CRO deliverable.

If they refuse or fumble two or more of these, walk.

What you can do yourself (and probably should, first)

Most stores under $5M in revenue don't need a CRO agency yet. They need a real diagnosis of which problem is dominant on their store — and the cheapest real CRO work an operator can do is the self-diagnosis we walk through in the prior articles in this series:

- [INTERNAL LINK: Why your Shopify store gets traffic but no sales] — three modes (Wrong Audience / Missing Trust / Checkout Leak). 30-minute funnel diagnostic from your own Shopify Analytics.

- [INTERNAL LINK: Why your customers add to cart and disappear] — three abandoner buckets (Price-checkers / Pause-and-thinkers / Checkout-shocked). 15-minute test using your add-to-cart, reached-checkout, and reached-checkout-to-purchase rates.

- [INTERNAL LINK: Why your store feels like dropshipping] — 7 trust cues + a 5-minute self-audit.

Run these diagnostics first. Half the time the operator finds the dominant issue is something they can fix themselves in an afternoon — a shipping cost made visible on the product page, a real founder photo added to the About page, three review screenshots replacing five suspect-looking reviews. The other half of the time, the operator walks into a CRO conversation already knowing exactly what to ask the expert to focus on — which dramatically improves the odds the engagement is productive instead of generic.

The operators we've worked with who get the most out of paid CRO are the ones who showed up with a named problem and a working hypothesis, not the ones who showed up looking for a service to tell them what's wrong.

When to call for help

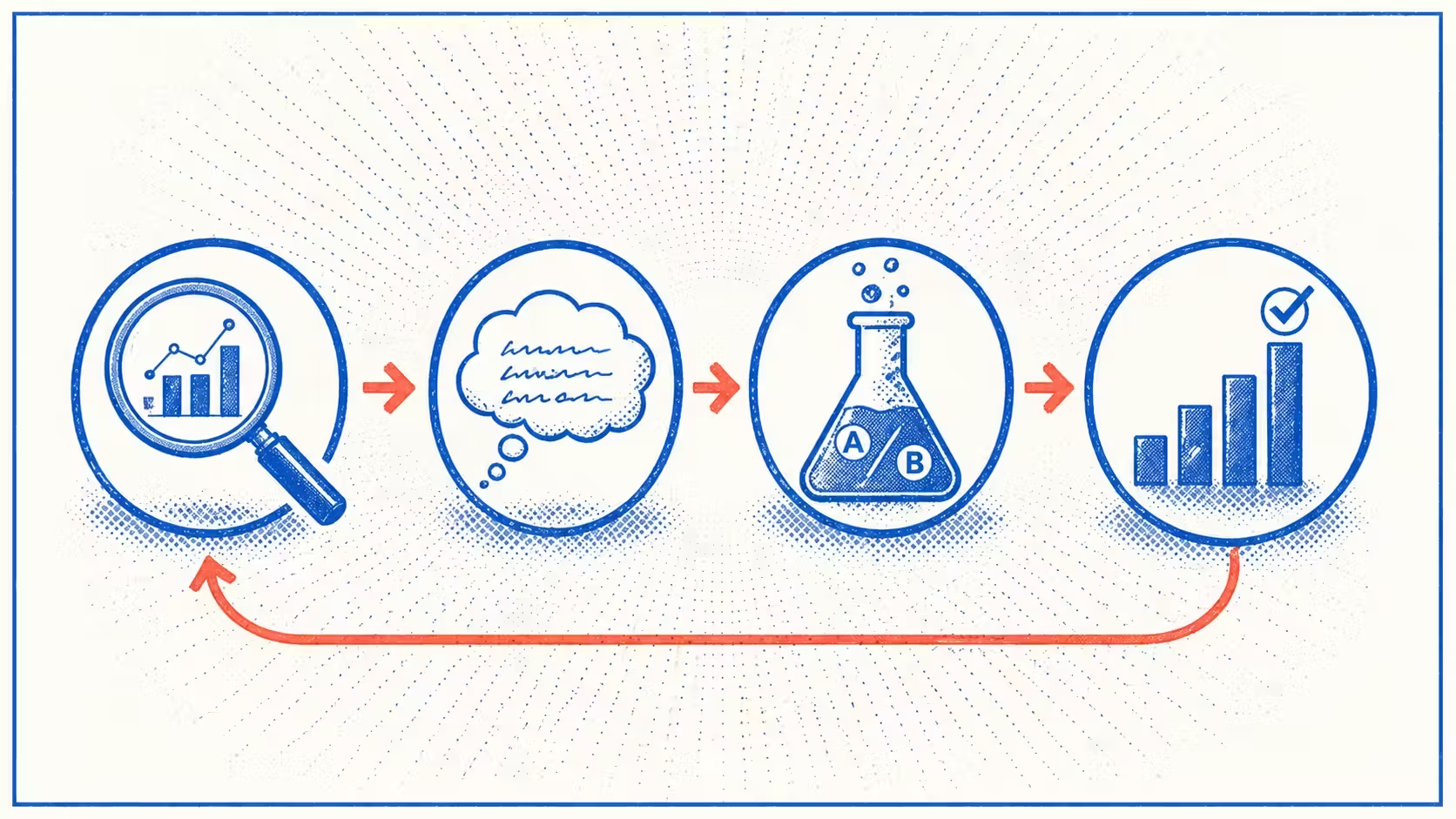

The diagnostic isn't the work. It's the first step of the work. Real CRO continues from "we know what's wrong" to "we test specific changes and roll forward the ones that work." That continued loop is where the conversion lift actually compounds.

The bottleneck operators hit when they try to maintain that loop themselves: it's hard to keep weekly attention on funnel data, and the dominant signal shifts faster than most operators can track manually. You fix the trust gap, the dominant issue moves to checkout shipping costs, you fix that, the dominant issue moves to a confused product page on a different SKU. Without consistent funnel-data attention, you're back to guessing.

That continuous diagnostic — running every day on your store, watching your funnel data, naming the dominant problem in plain English ranked by impact — is the work /cro automates. We connect to your Shopify, watch the funnel, and surface the dominant issue every day as a single named answer. "Your dominant issue this week: visitors are dropping off at the product page hero — bounce-from-PDP is up 8% since last week. Likely cause: a recent change to the product photo on your top-traffic page."

We're in pre-launch. Joining the waitlist gets you priority access and a free first diagnostic when /cro opens to the first cohort.